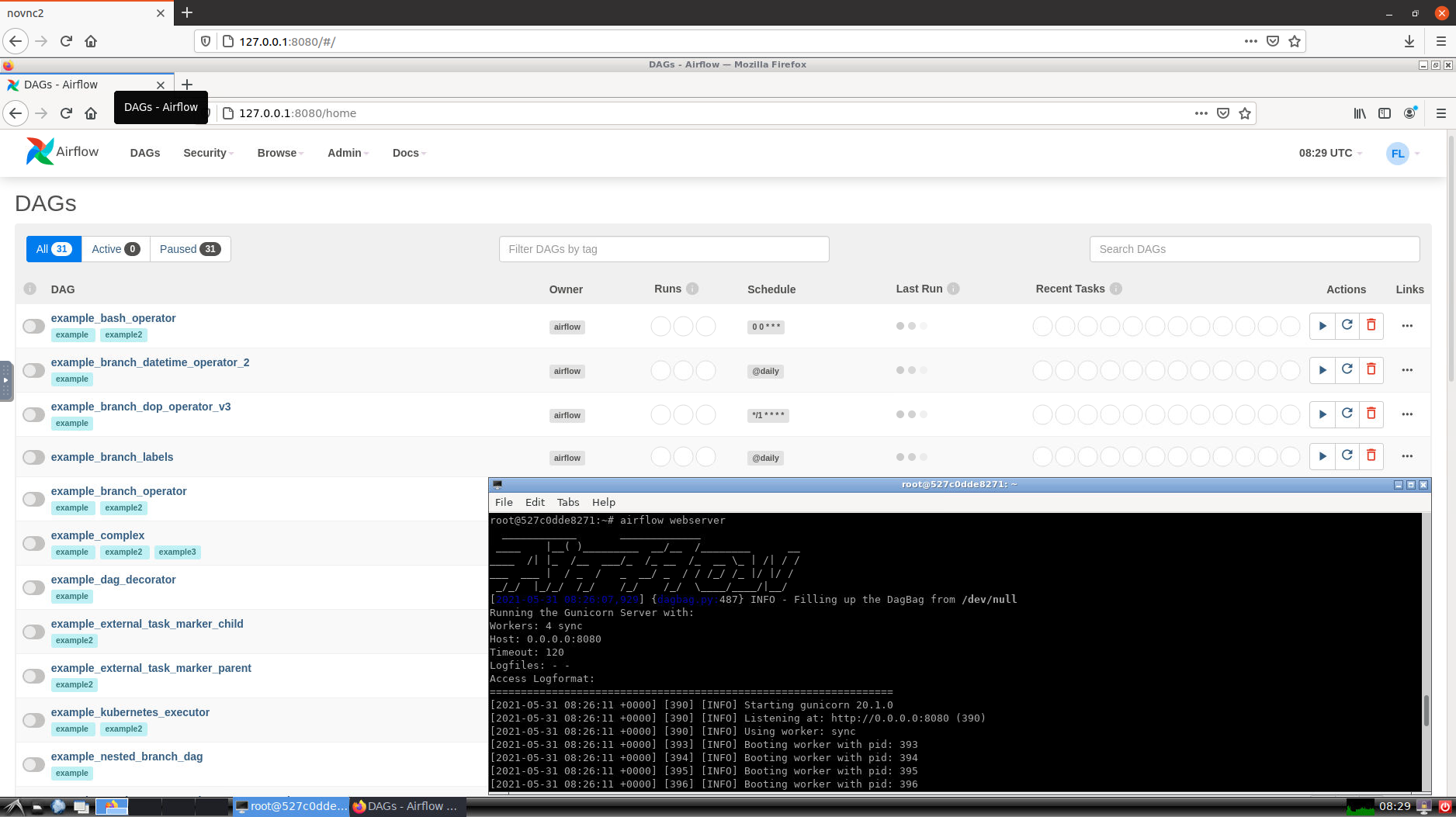

Git repository with code described in this article:

Airflow

Airflow is a platform to schedule and monitor workflows. It helps us automate scripts, it is Python-based, and it has a lot of features (interaction with cloud providers, FTP, HTTP, SQL, Hadoop…). It is easy to monitor your scripts thanks to a clean UI and notification features such as e-mail or Slack messages.

In our teams, we have different kinds of workflows, from simple Python scripts to complex ETL jobs used for our data warehouse.

Before Airflow, we had been using AWS Step Functions with AWS ECS. It worked pretty well, but our developers could not easily manage their workflows autonomously: we used Terraform to deploy the AWS Step Functions, and nobody except me used it. Then we discovered Airflow, which has simplified the way we deploy and monitor our scripts. Thus, our time to market has improved because of its ease of use.

For information, we use KubernetesExecutor and are big fans. We run Airflow in three environments:

- Local: the Airflow instance runs in Docker.
- Integration: Airflow runs in a small Kubernetes cluster. We deploy git branches on this environment with a Slack bot and CircleCI.
- Production: Airflow runs in a Kubernetes cluster.

If you are familiar with Airflow, you know there are variables and connections. In our integration and production environments, we use the excellent Helm chart, which handles the import of Airflow's secrets very well. But when we develop locally we use Docker, so we did not have an easy way to manage our Airflow secrets. The goal was therefore to use the same file structure in all environments. To avoid having multiple kinds of sensitive files, I made a script that offers the same feature as the Helm chart: it imports the secrets into our local Airflow.

An example of our local secret file before encryption:

$ cat settings/local/secret.yaml

I am not a fan of what I coded, but right now it works like a charm. If you want to improve it, please be my guest.

I encrypt these sensitive files with sops; I have already talked about sops and the way we use it. You are going to see how in the Makefile.

We need to install a few more packages:

$ cat Dockerfile

We mount our settings/local directory into a settings directory in the webserver container to import variables / connections. It will be used by the home-made script which imports secrets into Airflow.

settings/local/:/usr/local/airflow/settings
FERNET_KEY=OGcEH00sDw8fgOZ3S21K1K0Xr8t1TPR5G_XdVt-9HHc=

Make

A Makefile is a really good tool to automate a lot of commands. We use it to start our stack, manage secrets and clean up everything to do with the project.

Its targets run the DAG unit tests and flake8 (max line length 120, max complexity 12, with statistics) in the webserver container, open a Bash shell in the webserver, and follow its logs. Before the import, the Airflow secret is decrypted into a temporary file which is going to be mounted into the container; the import itself runs a python3 script through exec webserver bash -c. One step carries the comment "Ugly but necessary otherwise postgres is not" (the rest of the comment is cut off).
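The home-made import script is not reproduced in this article, so here is only a minimal sketch of the idea, not the actual implementation. It assumes a decrypted secret file that parses into a mapping with `variables` and `connections` keys (a hypothetical layout), and it assumes the Airflow 2.x CLI syntax (`airflow variables set`, `airflow connections add`); older Airflow releases use different flags. The function name `build_import_commands` is made up for illustration.

```python
"""Sketch: turn a decrypted secret mapping into Airflow CLI commands.

Assumptions (not from the article): the `variables` / `connections`
layout, the function name, and the Airflow 2.x CLI syntax.
"""
from typing import Any, Dict, List


def build_import_commands(secrets: Dict[str, Any]) -> List[List[str]]:
    """Return one `airflow` CLI invocation per variable and connection."""
    commands: List[List[str]] = []

    # Variables are plain key/value pairs.
    for key, value in secrets.get("variables", {}).items():
        commands.append(["airflow", "variables", "set", key, str(value)])

    # Connections are assumed to be given as connection URIs.
    for conn_id, uri in secrets.get("connections", {}).items():
        commands.append(
            ["airflow", "connections", "add", conn_id, "--conn-uri", uri]
        )

    return commands


if __name__ == "__main__":
    # Dummy values only; a real file would come from the sops-decrypted YAML.
    example = {
        "variables": {"environment": "local"},
        "connections": {"my_db": "postgres://user:pass@postgres:5432/db"},
    }
    for command in build_import_commands(example):
        print(" ".join(command))
```

Inside the webserver container, each command could then be executed with `subprocess.run(command, check=True)`, and the mapping could be loaded from the mounted file with PyYAML's `yaml.safe_load`. Building the commands separately from running them keeps the logic easy to test without an Airflow installation.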
